Artificial intelligence (AI) in healthcare is one of the most talked-about trends in modern medicine and analytics. But amid soaring expectations and glossy vendor pitches, it’s easy to lose sight of what AI actually delivers today versus what it promises for tomorrow.

In real clinical and operational environments, the value of AI shows up clearly in well-defined use cases like predictive risk scoring and natural language processing (NLP) — yet the technology also has real limits that healthcare organizations need to understand before they invest. This post breaks down where AI is genuinely helping health systems, where the hype still outpaces reality, and how pragmatic healthcare analytics teams can make smart, measurable decisions about AI adoption.

What We Mean by “AI” in Healthcare Analytics

“AI” is often used as a catch-all term, but in healthcare analytics it generally refers to techniques that go beyond traditional rule-based reporting — particularly machine learning (ML), predictive modeling, and NLP.

In practice:

- Predictive analytics uses statistical and ML models to forecast future outcomes, such as readmission risk or clinical deterioration.

- Natural language processing (NLP) digests unstructured clinical text (notes, reports, narratives) to derive structured insights.

- Generative models and large language models (LLMs) can draft text, summarize evidence, or help clinicians explore clinical questions.

These tools analyze large datasets, including EHR data, lab results, claims, and unstructured notes, to surface patterns and generate predictions that traditional analytics might miss.

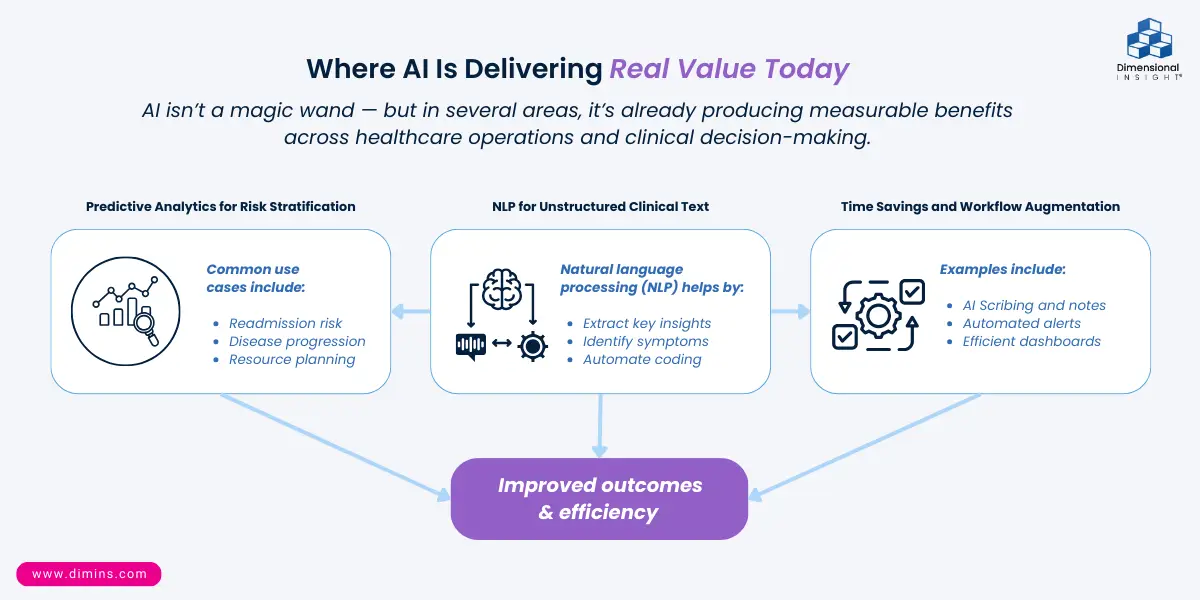

Where AI Is Delivering Real Value Today

AI is not a magic wand — but in several areas, it’s already producing measurable benefits:

Predictive Analytics for Risk Stratification

One of the best-documented applications of AI in healthcare is predictive modeling — where historical patient data is used to forecast future events.

Examples include:

- Readmission risk assessment, helping care teams prioritize follow-up and discharge planning.

- Disease progression forecasts that support proactive interventions.

- Resource planning and staffing forecasts that smooth clinical operations.

Early adopters report reductions in avoidable readmissions and more proactive care interventions — outcomes that directly tie to quality and cost performance.

Why this works: Predictive models can weigh many data elements at once and capture interactions that hand-written rules and static dashboards typically miss, and once deployed they can score patients in near real time as new data arrives.
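To make the idea concrete, here is a minimal sketch of how a readmission risk score might be computed and used to prioritize follow-up. It assumes a logistic regression model, one common choice for this kind of score. The feature names, weights, and the `Patient` type are hypothetical placeholders, not values from any real or validated model; a real model would learn its weights from historical outcomes.

```python
import math
from dataclasses import dataclass

# Hypothetical, illustrative parameters only. They are NOT taken from any
# real or validated model; a real model would learn them from historical data.
INTERCEPT = -3.0
WEIGHTS = {
    "prior_admissions_12mo": 0.45,  # admissions in the prior 12 months
    "length_of_stay_days": 0.08,    # length of the index stay
    "num_active_meds": 0.05,        # medication count at discharge
    "has_heart_failure": 0.70,      # 1 if diagnosis present, else 0
}


@dataclass
class Patient:
    patient_id: str
    features: dict[str, float]


def readmission_risk(features: dict[str, float]) -> float:
    """Logistic model: p = 1 / (1 + exp(-(b0 + sum_i w_i * x_i))).

    Features missing from the input are treated as 0.0.
    """
    z = INTERCEPT + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def prioritize(patients: list[Patient], top_n: int) -> list[tuple[str, float]]:
    """Return the top_n patients ranked by predicted risk, highest first."""
    scored = [(p.patient_id, readmission_risk(p.features)) for p in patients]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]
```

Ranking by predicted probability, rather than applying a single cutoff, lets a care team size the follow-up list to match its actual capacity.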

NLP for Unstructured Clinical Text

Clinical notes, consult reports, discharge summaries, and narratives are rich with insight — if you can extract it.

NLP systems:

- Turn free-text notes into structured insights (e.g., risk factors, symptom clusters).

- Support quality reporting and documentation improvement.

- Reduce administrative burden by assisting with coding or automating summary creation.

This means analysts and clinicians spend less time parsing text and more time acting on what it actually says.
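Production clinical NLP relies on trained models and curated medical vocabularies. As a deliberately simplified stand-in, the sketch below uses keyword matching plus a crude negation check, loosely in the spirit of rule-based approaches such as NegEx, to turn a free-text note into structured flags. The concept dictionary, phrases, and negation cues are hypothetical and far from complete.

```python
import re

# Hypothetical concept dictionary: structured risk-factor name -> phrases
# that might appear in a note. Real systems use curated vocabularies.
CONCEPTS = {
    "diabetes": ["diabetes", "dm2", "type 2 diabetes"],
    "smoker": ["smoker", "smokes", "tobacco use"],
    "chf": ["heart failure", "chf"],
}

# Hypothetical negation cues; a mention is treated as negated if one of
# these appears earlier in the same sentence.
NEGATION_PATTERN = re.compile(r"\b(no|denies|negative for|without)\b")


def extract_risk_factors(note: str) -> dict[str, bool]:
    """Map each mentioned concept to True (affirmed) or False (only negated).

    Concepts that are never mentioned are omitted from the result.
    """
    results: dict[str, bool] = {}
    # Split into rough sentences so a negation cue does not leak across them.
    for sentence in re.split(r"[.;\n]+", note.lower()):
        for concept, phrases in CONCEPTS.items():
            for phrase in phrases:
                match = re.search(r"\b" + re.escape(phrase) + r"\b", sentence)
                if match is None:
                    continue
                negated = NEGATION_PATTERN.search(sentence[: match.start()]) is not None
                # Any affirmed mention wins over negated ones.
                results[concept] = results.get(concept, False) or not negated
    return results
```

Negation handling matters because a phrase like “denies tobacco use” mentions the concept without affirming it; naive keyword counting would flag that patient as a smoker.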

Time Savings and Workflow Augmentation

AI also contributes value by automating routine tasks and amplifying human effort:

- Scribing and documentation support can ease the paperwork load that contributes to clinician burnout.

- Automated alerts flag trends sooner.

- Dashboards refresh with predictive insights without manual intervention.

These enhancements don’t replace human judgment — they extend it, allowing teams to focus on decision-making rather than data grunt work.
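An automated trend alert can be as simple as watching a rolling average. The sketch below assumes a hypothetical daily metric and a fixed threshold, and reports only the point where the average first crosses the threshold, so a sustained trend produces one alert instead of many. Real alerting would also need to account for seasonality, shifting baselines, and clinical review.

```python
from collections import deque
from collections.abc import Iterable


def trend_alerts(values: Iterable[float], window: int, threshold: float) -> list[int]:
    """Return indices where the trailing `window`-point average crosses
    above `threshold`.

    Only the crossing point is reported, not every point while the average
    stays high, so one sustained trend yields one alert.
    """
    recent: deque[float] = deque(maxlen=window)
    alerts: list[int] = []
    was_above = False
    for i, value in enumerate(values):
        recent.append(value)
        if len(recent) < window:
            continue  # not enough history yet for a full window
        is_above = sum(recent) / window > threshold
        if is_above and not was_above:
            alerts.append(i)
        was_above = is_above
    return alerts
```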

Where the Hype Still Outpaces Reality

For all its promise, AI in healthcare still faces fundamental challenges.

Bias and Equity

AI models are only as good as the data they’re trained on. When training data reflects historical inequities — for example, underrepresentation of certain demographic groups — models can perpetuate or even worsen disparities.

Bias can arise at multiple stages: data collection, model development, evaluation, and deployment. If not checked, biased models can lead to uneven performance across subpopulations.
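One practical check for uneven performance is to compute a model’s metrics separately for each subgroup rather than only in aggregate. This sketch computes sensitivity (the share of actual positive cases the model catches) per group and the gap between the best- and worst-served groups; the group labels and toy data are hypothetical.

```python
from collections import defaultdict
from collections.abc import Sequence


def sensitivity_by_group(
    y_true: Sequence[int], y_pred: Sequence[int], groups: Sequence[str]
) -> dict[str, float]:
    """Sensitivity (true positive rate) for each subgroup.

    y_true and y_pred hold 0/1 labels; groups holds a subgroup label per row.
    Groups with no actual positive cases are omitted, since sensitivity is
    undefined for them.
    """
    positives: dict[str, int] = defaultdict(int)       # actual positives per group
    true_positives: dict[str, int] = defaultdict(int)  # positives the model flagged
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def max_gap(rates: dict[str, float]) -> float:
    """Difference between the best- and worst-served groups."""
    return max(rates.values()) - min(rates.values())
```

Group sizes are worth reporting alongside the rates, because a strong aggregate score can hide poor performance for a small subgroup.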

Explainability and Trust

Many high-performing models — especially deep learning “black boxes” — are difficult for clinicians to interpret. This matters because:

- Clinical decisions impact human lives; providers need insight into why a model made a recommendation.

- Transparency drives trust and adoption, especially when workflows are high-stakes.

- Regulatory and ethical expectations increasingly require explainability.

Explainable AI remains a vibrant research field because clinicians must be able to reason about model outputs, not just consume them.
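For simple models, the “why” behind a prediction can be read directly from the model. Assuming a logistic regression with hypothetical weights, each feature’s contribution to the log-odds is its weight times its value, and sorting by magnitude shows which factors drove a given patient’s score. Black-box models need approximation techniques such as SHAP or LIME to produce comparable explanations.

```python
import math

# Hypothetical weights on the log-odds scale, for illustration only.
INTERCEPT = -3.0
WEIGHTS = {
    "prior_admissions_12mo": 0.45,
    "length_of_stay_days": 0.08,
    "has_heart_failure": 0.70,
}


def explain(features: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Return (predicted probability, per-feature log-odds contributions).

    Contributions are sorted by absolute size, largest first, so the
    factors that moved this patient's score most appear at the top.
    Missing features are treated as 0.0.
    """
    contributions = {name: w * features.get(name, 0.0) for name, w in WEIGHTS.items()}
    z = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-z))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked
```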

Integration and Implementation Challenges

AI isn’t plug-and-play. Successful implementation depends on:

- Quality data pipelines and integration across disparate systems.

- Alignment with clinical workflows rather than adding extra clicks.

- Change management that brings clinicians and analysts along the adoption curve.

Many projects that “look good in demos” struggle to show value when they hit live clinical settings — not because the models fail, but because they don’t fit daily practice.

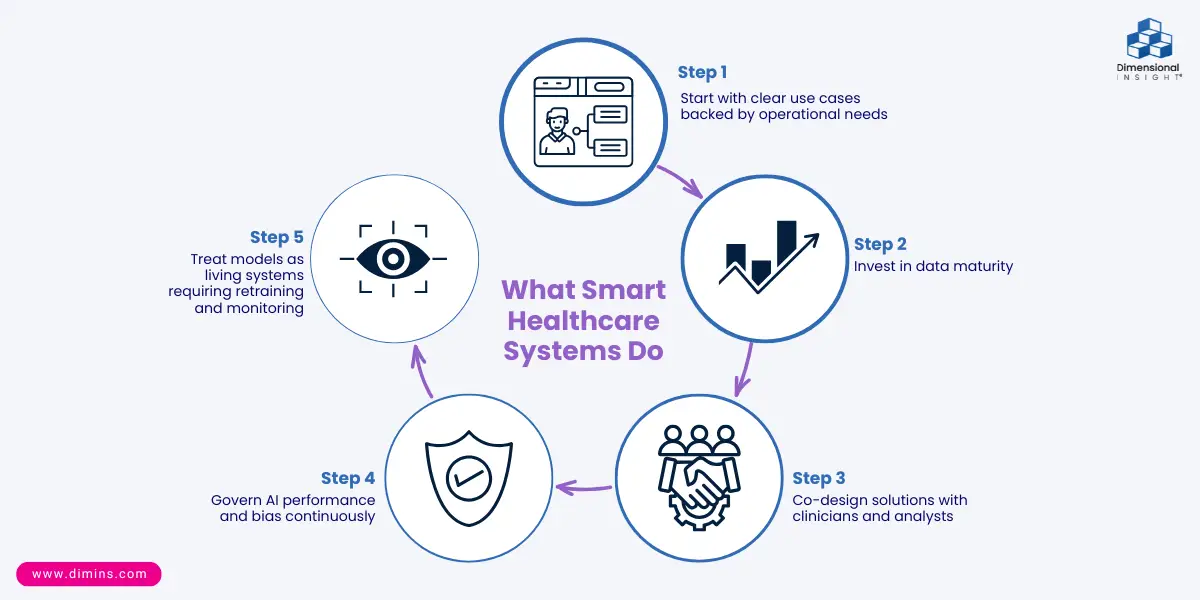

Realistic Expectations: What Smart Healthcare Systems Do

Healthcare leaders who succeed with AI distinguish practical help from shiny promises. They:

- Start with clear use cases backed by operational needs (e.g., targeted readmission reduction, workforce planning).

- Invest in data maturity — clean, unified data is mandatory for reliable AI outcomes.

- Co-design solutions with clinicians and analysts so models support real workflows.

- Govern AI performance and bias continuously, not once at launch.

- Treat models as living systems requiring retraining and monitoring.

This grounded approach yields measurable outcomes, not just hype.
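Continuous governance needs a concrete signal that a model’s inputs or scores have shifted since it was validated. One widely used measure is the Population Stability Index (PSI), which compares the binned distribution of a recent sample against a baseline. The sketch below assumes you choose the bin edges yourself; the 0.1 and 0.25 status bands are a common rule of thumb, not a standard, and should be tuned per use case.

```python
import math
from bisect import bisect_right
from collections.abc import Sequence


def psi(baseline: Sequence[float], recent: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline and a recent sample.

    `edges` are interior cut points sorted ascending, giving len(edges) + 1
    bins. Empty bins are floored at `eps` to avoid log(0) and division by zero.
    """
    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(recent)
    # PSI = sum over bins of (actual - expected) * ln(actual / expected)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_status(value: float) -> str:
    """Rule-of-thumb bands; tune these for each model and use case."""
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate shift"
    return "significant shift"
```

In practice a check like this would run on a schedule, comparing recent model scores or key inputs against the validation-period baseline, with a significant shift prompting review and possibly retraining.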

Connecting AI to Dimensional Insight’s Analytics Philosophy

At Dimensional Insight, we view AI not as a standalone buzzword but as an extension of a broader analytics strategy focused on:

- Explainability first: Analysts and clinicians need to understand the “why” behind every signal, not just see predictions.

- Workflow-aligned insights: AI outputs reinforce, not interrupt, decision processes.

- Data integrity as the foundation: A robust data warehouse and governance framework is the precondition for any advanced analytics — including AI.

Our focus is on practical help: supporting healthcare teams with tools that enhance confidence, clarity, and care quality, rather than chasing the next flashy algorithm.

Conclusion: AI’s Best Path Forward in Healthcare Analytics

There’s no doubt AI will continue to transform healthcare. But its most valuable contributions come not from out-of-the-box hype but from pragmatic, measurable applications that respect clinical workflows, data realities, and human judgment.

For healthcare organizations committed to evidence over emotion, AI becomes a tool that amplifies insight — not a magic solution that replaces human expertise.

The help that matters isn’t the loudest promise — it’s the one that’s proven to work.
