Workbench® Visual Integrator™ (VI) has rich support for different types of file encoding. When you have input from multiple sources, you’ll want to make sure that the file encoding matches each of your input files. Just look at a VI script Filein input object, and you can see that it supports these encodings: auto, ascii, gb 18030, latin1, utf-8, unicode, unicode-be, and unicode-le. Likewise, Builder™ and Spectre™ support these encodings, too.

Twitter: What You Need to Know about Encoding

Admittedly, this topic is pretty geeky, but does it pique your interest to plunge into the esoteric history of digital file encoding? What are each of these options? And, which do you choose? To answer these questions, to understand why different types of encoding are used, and to confidently make the right choices for your data, let’s start at the beginning.

In the beginning…

Any text in digital form has some form of coding, a translation of the bits and bytes in the file to actual characters and digits. Under normal circumstances any block of bytes is meaningless unless the code is apparent or given.

In the digital beginning, the range of characters that needed encoding was small, only the letters of the Latin alphabet or even just the uppercases. They all fitted within one byte of 8 bits. One of the first encodings, devised by IBM was EBCDIC from the early sixties of the previous century. Very weird if you’re used to ASCII, but EBCDIC is still alive in Mainframes and AS/400s today. The code cut the byte in two, using one half to denote the variant and the other to code the characters.

Later there was ASCII (ANSI in Microsoft terminology). ASCII uses 7 bits in a byte. The first bit is set to 0. So decimally speaking the codes 0-127 are used. If the first bit is one (128-255), it’s called extended ASCII. At the start, these ´high’ ASCII characters were the home of specials like Ç and symbols such as the following:

![]()

which enabled a programmer to draw intricate user screens, before the advent of Graphical Interfaces. Even they are not altogether dead nowadays.

However, the place in the attic was freed up and some used the extended part to store other characters like the Greek alphabet or Russian. That is, store Greek characters is not entirely correct. What was stored was still the number 128, but now it did not mean the same thing anymore. It was taken to mean the letter alpha and not Ç.

Code Pages

In time, a lot of these “sets of meanings” of the extended part were devised, and they became known as “Code Pages”. It did not change the actual value of the bytes of computer files, just the way they were rendered by interface systems.

The code pages were standardized, and they were numbered and named. Several times. There are CP-numbers, ISO numbers, and names like the familiar latin1, AKA ISO-8895-1, AKA CP1252. This latin1 Code Page only uses a certain range of the possible bits, and in the attic are the diacritical character combinations commonly used in European writing systems (not languages!), including Þ and ß.

As handy and simple as the Code Page system is, there were several problems with it. First, it was very difficult to have both Greek and, for example, Hebrew in one text (which if you are a Bible scholar is problematic) or Russian and Greek, which must have bothered somebody. But, more pressingly, none of the world writing systems that were non-alphabetic could be fitted. Syllabic and even more word-scripting systems have far too many signs to express their variety in 8 bits. So, a new approach (and even more ISO numbers) were needed. Enter: Unicode.

Unicode

The name bares scrutiny: Unicode as such is not a code, that is, it is not an encoding system. It is a list. A very long list of characters, beginning at 0 and going on like there’s no tomorrow. An absolutely brilliant list can be found at the wonderful website of https://unicode-table.com/en/ which also labels groups of ‘characters’ to a writing system and the languages for which it is used and where in the world. Among my favorites are Cuneiform, Egyptian Hieroglyphs, and the still not deciphered 4000-year-old Linear A script from Crete. But that’s me… The list is not frozen and it changes. We`re on version 11.0 currently.

Encoding

Now take the glyph ![]() (meaning ‘to call’ if I am not misinformed). It is number x1301E or decimal 77854 in the Unicode list. How do we ‘store’ this number in a file? Evidently it won’t fit in a byte. But how will we fit the bits in: 0001-00110000-00011110. We can’t just take three bytes for this, for how would we know that the first byte is part of something bigger? Enter: Unicode-Encoding.

(meaning ‘to call’ if I am not misinformed). It is number x1301E or decimal 77854 in the Unicode list. How do we ‘store’ this number in a file? Evidently it won’t fit in a byte. But how will we fit the bits in: 0001-00110000-00011110. We can’t just take three bytes for this, for how would we know that the first byte is part of something bigger? Enter: Unicode-Encoding.

What was needed was a way to encode these large numbers into bytes (more than one evidently) without having to encode the letter A as 00.00.00.40, in other words use four bytes (or even more when the Unicode list gets extended even further) for every character. That would be rather inefficient.

UTF-8

A clever invention was the UTF-8 encoding. It basically uses one byte if the number will fit in 7 bits and an extra 8 bits for every step above that. Hence UTF-8 – guess how many are used in UTF-16? The reason only 7 bits are used is that we need one bit to signal that there is more to come for this number or character. UTF-8 was so clever, because the Unicode numbers for A-Z and other non-extended ASCII text coincide fully with the ASCII codes. Even the zero first bit is the same. So, a non-extended ASCII text can be taken to be encoded in UTF-8 free of charge. Rumor has it that devising UTF-8 was the only way to get the Americans interested in getting aboard the Unicode train, for evidently the English (and even American) language can get along quite nicely without anything outlandish beyond a-z and A-Z. It’s also important to realize that UTF-16 doesn’t just extend with 16 bits, it also begins in 16 bits. So, the majority of the worlds digital texts, consisting of lower ascii takes up twice the room as it would in UTF-8 (or latin1).

UCS-2

Meanwhile, back at the office, Microsoft had its own ideas. It encoded the UCS (Universal Coded Character Set) which is code-for-code identical (now) to the Unicode list, in UCS-2 encoding. This is much like UTF-16 especially in that it uses two bytes to begin with. This is what, for example, MSSQL uses and why it’s twice as big as any other database using UTF-8. In an extreme effort to create maximum confusion with the least bit of words (or bytes of code), this UCS-2 encoding is sometimes referred to as … “Unicode”. They don’t come any darker… Don’t think Old Bill has a monopoly on remarkable decisions: Big Blue (IBM) has a Code Page number for … UTF-8 encoding (1208). And Diver®, following common denomination uses both. But, we’re ahead of ourselves.

Collation

Another phrase used in connection with character sets and encodings is collation. This is used primarily in systems that need to do something with texts, such as, sorting. Databases use collation. The well-known mysql, being of Swedish descent in its Open Source past, used a default collation of latin1_swedish_ci. It means that, for instance, in ordering text from a column in this collation, the byte sets will be interpreted as latin1 ascii, case insensitive (AaBb) with rules for special Swedish characters. (I suppose that Å comes after A or words to that effect.) I would not like to venture a guess as to how many mysql databases have kept this not so cunning default, as not many will have to support Select text from MuppetScenario where actor=’Swedish Chef’.

Encodings in Integrator

As we mentioned, Diver, or in this case Integrator, supports various encodings. In fact, the list is rather remarkable. They are: auto, ascii, gb 18030, latin1, utf-8, unicode, unicode-be, unicode-le.

Endianness

To start with the last two, unicode-be and unicode-le, we need to enhance our phraseology a bit. Computer files can have a BoM (“Special delivery, did you order one?”), which stands for Byte Order Mark. A BoM is a number of bytes at the beginning of a file with a special meaning that prefixes the data. In general, multi-byte numbers can have different orders in which the bytes are stored. Decimal 259 is stored in two bytes, x01 and x03. The first byte is 256 in ‘value’ and the second 3. So, the first byte is the most significant, highest value for your bit money. For reasons beyond my comprehension, different Processor Families store these bytes in different orders, with either the Most Significant Byte first (MSB) or the Least Significant Byte first (LSB). So, this 259 can be there digitally as x0103 and x0301. The internet uses MSB, and so do Motorola, Sparc, and IBM mainframe processors. Whereas, Apple and Intel use LSB. MSB is also called Big-endian (read as: The Big End comes first), and LSB is Little-endian. If you’re ever programming on bit level: don’t confuse Big-endian to mean, ‘the Big is on the End’.

So, we already saw that Unicode in this context actually means UCS-2. We now know what the specific Unicode-be (big endian) and Unicode-le (little endian) stand for. If ever the Unicode encoding setting in Integrator garbles up a text file, but you know it to be in UCS-2, try any of the Big or Little Endians…

We know latin1, ascii (no attic), and I’m telling you now that GB18030 is the official People’s Republic of China’s code set for both simplified and traditional Chinese characters. That leaves us with auto.

Deriving the encoding

According to the manual, auto “sets the encoding based on the file signature and the Unicode state of other objects in the same task”. That is a very useful characteristic and often it will suffice. But let’s go a step further and see what can be learnt from the file itself by examining it. We have to come back to the BoM once more. These byte marks do not just tell the receiving processor what the byte order is, but also, that the piece is encoded Unicode, and which encoding. So, opening with bytes FF FE is UTF-16 Little Endian, and the other way around FE FF it would be Big Endian. You could call that a give away . Now, there is something special about UTF-8. Because it is a one byte encoding system, a BoM is not necessary for the Order information, but still there is a mark for UTF-8 (EF BB BF). But, its use is not prescribed, and there is a lot of UTF-8 that is not marked as such. Now in the case of UTF-8 and the way multiple byte overflows are encoded, there are numerous non-valid bit combinations. Heuristic testing of a file could ascertain therefore that it is probably NOT encoded in UTF-8, at least not valid UTF-8, so that it probably is 1-byte ASCII with an attitude.

For a cool case study of UTF-8 usage, check out the appendix. (Does a blog have an appendix? Well, this one does!)

Guessing the Code Page

But how to guess a Code Page? If a char(128) in a single byte text is preceded by the characters ‘gar’ and followed by ‘on’ then there is a pretty good chance the French garçon is meant, and the 128 is not a Greek Alpha or anything else but ç, so latin1 is in use. But, to safely predict single-byte encodings, you’ll need either a vast compendium of knowledge of the worlds languages (not writing systems!) never before compiled in the history of computers, or an insane amount of luck. Rock and a hard place.

Here as well as in interpreting the data itself, there is no substitute for “Know Thy Data”. And in this case, Thy Encoding. Sometimes you’ll need to ascertain what encoding is used at the source, where the data comes from.

Recommendations

Finally, here are some recommendations for using encodings with Integrator:

- As a rule of thumb, I would say: don’t lose information. If a source is in Unicode, as it more regularly is, keep it that way.

- Should encodings of files be converted? It’s important to note that conversions generally go only up in ‘complexity’ not down. That is, not safely. It is quite unwise to try to convert a UTF-8 encoded source to a single byte ASCII. It might work very well to get anything thrown at you converted into latin1. Until a new person enters the database with a name not spelled in all of the characters supported by latin1. Don’t think ‘we won’t be doing Chinese for a long time’ – it might be much closer than you think.

- Integrator uses UTF-8 internally. It is good practice to save intermediate files also in UTF-8. But, if you don’t use a Unicode enabled version of Diveline (and other DI products), there will be a silent conversion back to 1-byte ascii in the model or cBase. If your source wasn’t UTF-8 or latin1, this is potentially dangerous. (Your Greek alpha will turn into Ç with no clues on what it was before.)

- Watch what’s going into your Integrator Filein object. There is only one setting for the encoding. So, split your file-Ins if you have files with different encodings. Be as explicit as you can and avoid relying on ‘auto’, because other files might change, but that should have no effect on the encoding setting of that particular file.

And, a few side notes:

- There might be texts in encodings that Integrator cannot yet support. Since latin1 is the only codepage mentioned, this is not so difficult to imagine. This isn’t a problem if the whole system is in the same encoding. For instance, a DI customer uses CP1255 (Hebrew). In a sense, Diver doesn’t need to know. Whether a dimension value is ùìåí or שלומ (shalom), Diver doesn’t care. And under normal circumstances, Diver will ask the system locale how to render the attic letters. But even then, it makes sense to convert to Unicode and be prepared for the future to mix with other scripts and create exports that conform to modern standards.

- If a file is delivered in a one-byte ascii other than latin1, conversion cannot be done by Integrator. Conversion can be done by an external tool such as iconv, which is easily housed in a Production® extension, converting it to UTF-8 before processing. Need some help with creating extensions? Check out How to Use DI-Production Extensions.

- There is a difference between interpreting a series of bytes as holding a specific Unicoded character and being able to render that in the interface. A text can be correctly (de)coded and presented, but still have little squares instead of dancing hieroglyphs, because the installed fonts just don’t have the characters. There are font files that support a broad spectrum of Unicode Code Points (called pan-Unicode fonts). But there is maximum: TrueType fonts (most popular of Windows) can store up to 65535 glyphs. The normal “Arial” Font has 3988 glyphs, the “Arial Unicode MS” variant has 50377. But if you want to print your poetry in Old Permic or Elbasan you’ll have to install a specific font for that.

Appendix: UTF-8, a Technical Case Study

Did you ever come across the character à in the middle of a word? For instance, there is a dimension value “José”. Now of course, someone really could have meant to write JosÃ, some Portuguese novelty, immediately copy-righted with ©. But more likely, this is a case of mistaken identity. Mistaken encoding to be more precise. Let’s look into this example a bit more closely. On bit level.

The two bytes represented here by é are xC3 and xA9. Spelled out in bits:

Let assume it is UTF-8 that is used for encoding Unicode characters. Does that fit and what does it say then? UTF-8 stores the first 127 code points (so, characters with number 0 – 127) in the first byte, with the first bit set to 0. The first bit of à is 1, so this is not a 1-byte character. If the Code Point number is bigger, then the next byte is used as well. But how?

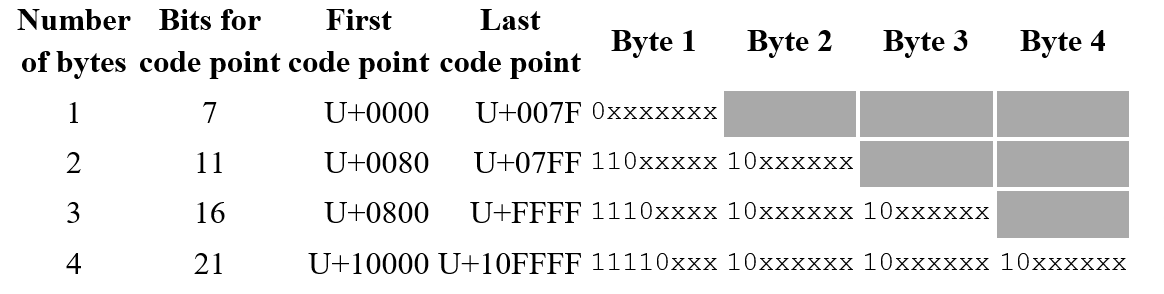

The following chart shows the layout of UTF-8 bytes. Even though an extra byte delivers 8 more bits, they don’t all come in to use for storing the Code Point (the number of the Unicode character from the Unicode table). In a 1-byte representation the first bit is zero and 7 are for code point use, which are the xxxx’s in the chart.

If more bytes are used, than the first bit is set to 1, followed by a 0 bit. In fact, the number of 1’s at the beginning of byte #1 stand for the number of bytes in use for this code point. Also, to denote that the follow up bytes are just that, they start with 10 in the first two bits. So that effectively leaves only an additional 4 bits if a second byte is used. Rather disappointing, but there really is no way around that. Then, for every extra byte 5 additional bits turn up for Code Point Duty. The largest code point that you can store in 4-byte UTF-8 combination is x10FFFF which is 1,114,111 in decimal and the current maximum for Unicode. However, it can be imagined what a still larger number in 5 bytes would look like. Although the architecture has a limit, with every extra bit, the max number doubles.

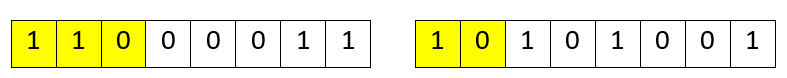

Now, let’s get back to é. We can now match both the first 3 bits of the first and the first 2 bits of the second byte. This could very well be a 2-byte UTF-8 number.

Most likely in reading this file the encoding was set to “latin1”, instead of “utf-8”.Which means the code point itself would be 11 with 101001, which is xE9 or decimal 233. That number in the Unicode list stands for é. So, hey, it says “José”. Makes sense…

- All about Encoding: Latin1, Unicode, and the Swedish Chef - September 26, 2018