DI-Production is a visual tool for developing production scripts. Production scripts help you automate processes. The scripts can automate and schedule simple to very complex processes such as extract, transform, and load (ETL) related tasks like loading data, moving files, and building data models.

But what if you have a task to automate that isn’t part of the standard set of process and control functions? What if you want to ensure that everyone on your development team uses the same solution for a given challenge? That’s the perfect time to create and use DI-Production extensions for standardized, reusable solutions.

DI-Production scripts

In newer versions of Diver Platform, DI-Production is part of the Workbench development environment. In production scripts, there are two main types of nodes:

- Process nodes run out-of-the-box functions such as Builder, Integrator, Copy, Delete, and FTP.

- Control nodes control the logic flow of the script, for example with loops and forks.

In addition to these node types, there are extensions. So, what are extensions? An extension is a user-defined, custom process node. An extension can contain any code that you can create and run on your DiveLine server, but the optimal use case for an extension is a generic piece of code that you want to reuse over and over again and maintain in one place.

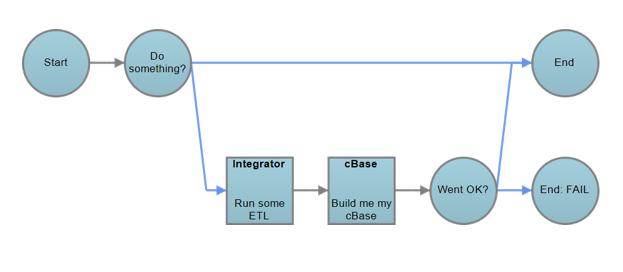

Sample production script

Here’s a sample production script. (See Figure 1.) It starts with a “Start” node, like all scripts, and then there’s a conditional node that will determine whether certain conditions are met, such as whether a certain file exists or a parameter holds a certain value. If the conditions aren’t met, the script ends. If all conditions are met, the script continues to an Integrator process node and then to a Spectre build script node. Then, a second conditional node checks if the Integrator and Spectre nodes ran successfully. If they did, the script ends successfully; otherwise, the script sends an email alert and fails.

Figure 1: Sample production script

Now we have the script, and it’s ready to be deployed. How do we deploy it?

The challenge: Deploying with development, test, and production version control

Here we are going to use an example of how one of our European customers uses production extensions. This customer develops scripts in a development environment and then promotes them through acceptance and on to production. One problem this customer faces is how to maintain both the script’s static code and the script’s configuration. The script code is always exactly the same, but the configuration often differs for each environment. For example, a configuration in development can be set to load a limited amount of data, whereas production needs to process the entire dataset. Or, in development, the configuration can have a personal email address to send failure alerts to, but in production the address is a functional mailbox that alerts a team of people.

Similarly, when testing in the development environment, the conditional node might need to check for different conditions. For example, the parameter value is probably different in the production environment. But, when you deploy the script to production, you want to promote the exact code that was tested and confirmed to be working in the development environment. You want to promote the code without making any changes, but you want the configuration to match the environment in which the code is running.

The goal is to create static and stable code and be able to roll out that code and manage the different configurations through all environments. That’s the challenge.

Example: Dynamic Configuration Selector extension to manage environment configurations

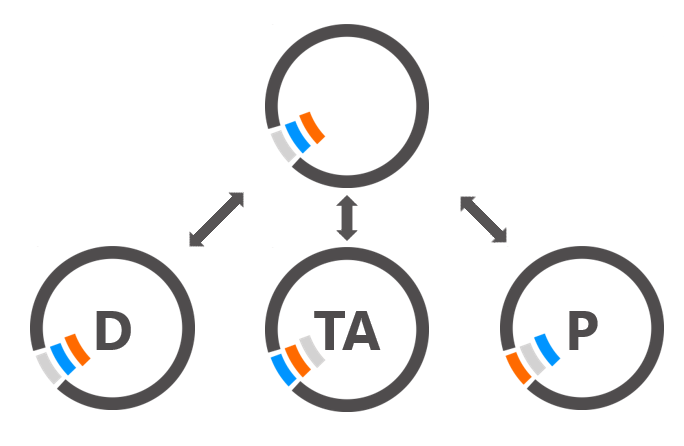

To maintain code across multiple environments, many shops use version control. With this model, you can push static code from development to the central repository and then pull the code from the central repository into the other environments, such as acceptance testing and production. That way, you can roll out changes or roll them back as necessary. (See Figure 2.)

This model works for the static code, but what about for the configuration, shown as colored bits in the diagram, that is different for each environment? How do you distribute the configuration? Well, what if we store all the possible configurations in the central repository? But, that brings another challenge: how do you get each different environment to pick its own version of the configuration?

Figure 2: Version control of code

A solution this customer has implemented is to load the entire configuration into the central repository and distribute it to each environment along with the static code. Then, each environment dynamically selects its own configuration. (See Figure 3.) The customer implements this solution over and over again in all modules and scripts by using a DI-Production extension called DynaCon, which is short for Dynamic Configuration Selector. Just by using the extension, all code and configuration can be rolled out to every environment with no manual tinkering at all.

Figure 3: Version control of code and configuration files with dynamic selection

Overview: DynaCon extension

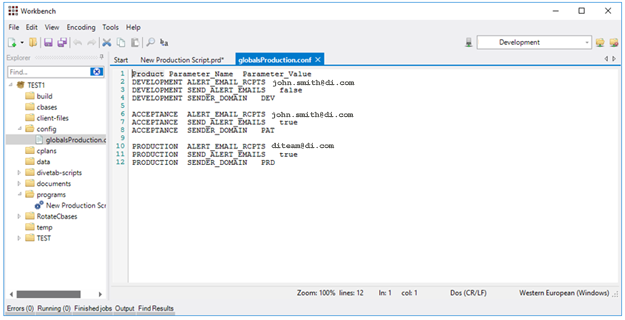

How does DynaCon work? Here’s a quick overview. A configuration file holds all of the entries for all environments. In each entry, the first column holds the environment name, the second column holds a key, and the third column holds a value. The keys in the second column are the names the code references, so they are the same for every environment. The values in the third column are specific to each environment. (See Figure 4.)

Figure 4: DynaCon configuration file
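To make the file layout concrete, here is a minimal Python sketch. It assumes the configuration file is plain comma-separated text with no header row; the environment names (DEV, ACC, PRD), keys, and values are hypothetical, and `load_config` and `check_same_keys` are illustrative helpers, not part of DynaCon. Because the script code only ever references keys, a useful sanity check is that every environment defines the same set of keys.

```python
import csv
import io
from collections import defaultdict

# Hypothetical DynaCon-style configuration file (no header row):
# column 1 = environment, column 2 = key, column 3 = value.
SAMPLE_CONFIG = """\
DEV,RowLimit,1000
DEV,AlertEmail,developer@example.com
ACC,RowLimit,100000
ACC,AlertEmail,acceptance-team@example.com
PRD,RowLimit,ALL
PRD,AlertEmail,ops-alerts@example.com
"""


def load_config(text):
    """Group the three-column rows into {environment: {key: value}}."""
    config = defaultdict(dict)
    for env, key, value in csv.reader(io.StringIO(text)):
        config[env][key] = value
    return dict(config)


def check_same_keys(config):
    """Return the environments whose keys differ from the first environment's.

    The script code references keys only, so every environment should
    define the same keys; only the values may differ.
    """
    if not config:
        return []
    envs = list(config)
    expected = set(config[envs[0]])
    return [env for env in envs[1:] if set(config[env]) != expected]


cfg = load_config(SAMPLE_CONFIG)
print(cfg["DEV"]["RowLimit"], cfg["PRD"]["RowLimit"])  # 1000 ALL
print(check_same_keys(cfg))  # []
```

A non-empty result from `check_same_keys` points to an environment that is missing a key (or has an extra one) before any script tries to use it.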

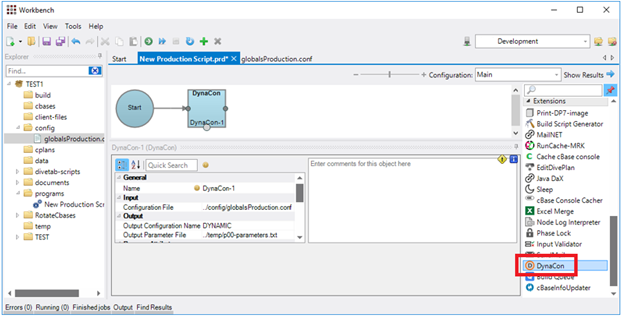

Then, to use the DynaCon extension, which is a single code block, just drag the extension onto the script Task Flow panel. (See Figure 5.) Specify the configuration file as input and a location to save the results file as output.

Figure 5: DynaCon extension in a production script

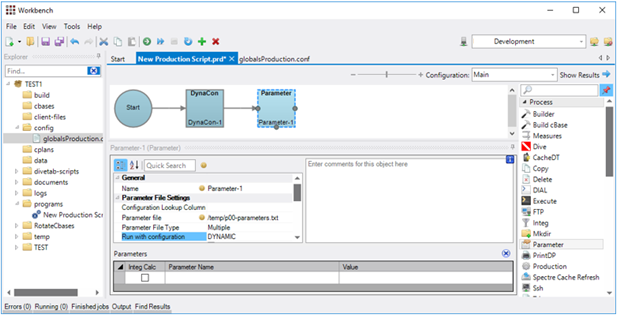

Because a production script that runs from an extension can’t set parameters, there’s one more step to load the results into parameters. Point the Parameter object to the output generated by DynaCon and make markers. (See Figure 6.) Whenever the script runs, it will execute all these nodes.

Figure 6: DynaCon production script passing parameters

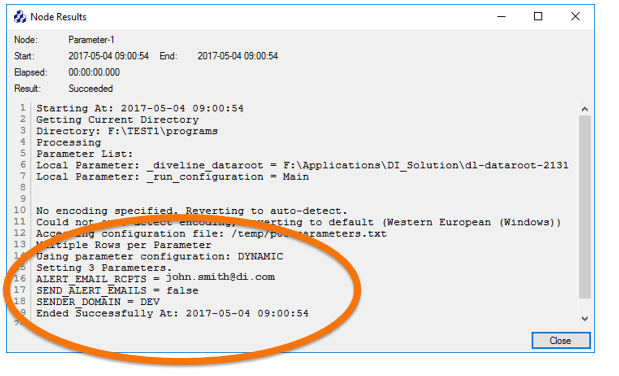

Now, the same code running in different environments sets different parameters on the fly. (See Figure 7.) The extension makes parameter selection fully dynamic for different environments, and it’s very easy to use the extension wherever you need it. In fact, the DynaCon code itself is static and can be managed by version control just like other extensions.

Figure 7: DynaCon output in development environment

Details: DynaCon extension

Let’s look at more of the inner workings for the DynaCon extension and how you install it. In Diver version 7, you can use projects in Workbench to organize your code. The DynaCon extension is in a project called “extensions” where the customer develops and maintains all extensions.

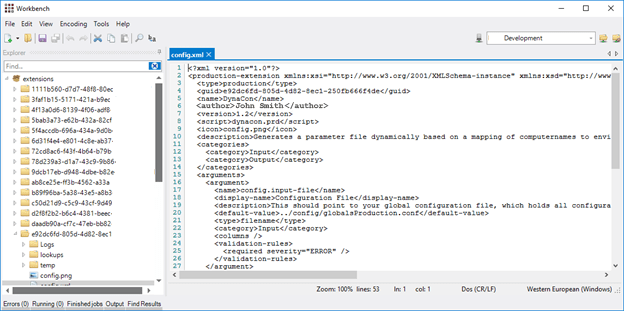

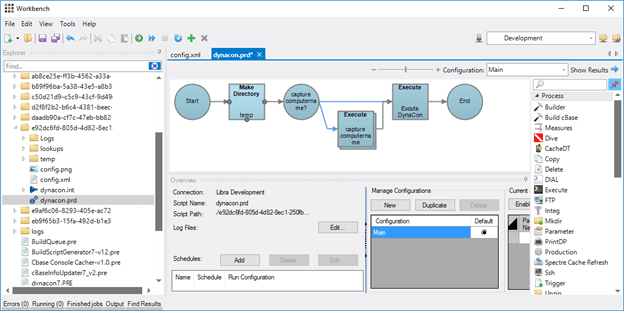

Each extension has a unique global identifier. Also, each extension has a config.xml file, which defines the extension properties such as the kind of extension it is and what script it runs first. In this example of DynaCon, it is of type production and the first script that runs is DynaCon.prd. (See Figure 8.)

Figure 8: DynaCon configuration file

Next is the DynaCon.prd production script. Running in the Microsoft Windows environment, DynaCon.prd captures the %COMPUTERNAME% variable, which is different on every server, and maps it to an environment that matches an entry in the configuration file. Then, DynaCon runs an Integrator script. (See Figure 9.)

Remember that even though we’re following the example of DynaCon, the extension code can be any code that you want.

Figure 9: DynaCon production script
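The computer-name-to-environment mapping can be sketched in Python as follows. This is not DynaCon’s code: the host names in `SERVER_TO_ENV` and the function `current_environment` are hypothetical. The sketch reads `COMPUTERNAME`, which Windows sets to the machine name, and fails loudly when a server isn’t mapped rather than falling back to some default environment.

```python
import os

# Hypothetical lookup table: Windows computer name -> environment name.
# Keys are stored in upper case because the lookup upper-cases the name.
SERVER_TO_ENV = {
    "DIVER-DEV01": "DEV",
    "DIVER-ACC01": "ACC",
    "DIVER-PRD01": "PRD",
    "DIVER-PRD02": "PRD",
}


def current_environment(lookup=SERVER_TO_ENV, environ=None):
    """Map the COMPUTERNAME variable to an environment name.

    Windows computer names are case-insensitive, so the name is upper-cased
    before the lookup. An unmapped server raises an error instead of
    silently running with another environment's configuration.
    """
    if environ is None:
        environ = os.environ
    computer_name = environ.get("COMPUTERNAME", "").upper()
    if computer_name not in lookup:
        raise RuntimeError(f"No environment mapped for computer {computer_name!r}")
    return lookup[computer_name]


# Usage with an explicit environment dict (no dependency on the real machine):
print(current_environment(environ={"COMPUTERNAME": "diver-prd02"}))  # PRD
```

Several servers can map to the same environment, which is why the table maps names to environments rather than the other way around.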

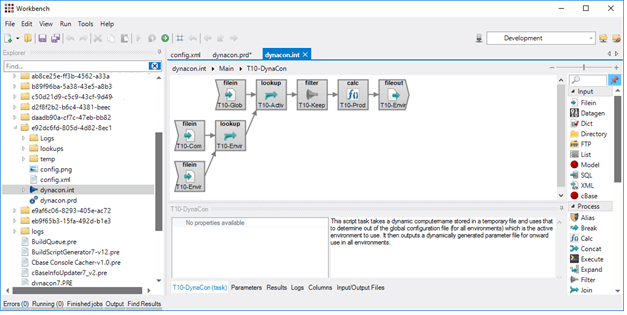

Next is DynaCon.int, the Integrator script. DynaCon needs to set parameter values for the environment, and the Integrator script is where that happens. It loads the specified config file, matches the computer name from the system variable against the local lookup table, keeps only the values that match the current environment, and saves the result to the specified output file. (See Figure 10.)

Now, you have all of the correct parameter values for the environment in which DynaCon is running. That’s how DynaCon works!

It doesn’t hurt to say it one more time: the Integrator script can be any code that you want!

Figure 10: DynaCon integrator script
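Assuming a comma-separated config file (environment, key, value) and an output file of key/value pairs with a header row, the filter-and-save part of this step might look like this in Python. The function name `select_configuration` and the output format are assumptions; the real DynaCon output is whatever the downstream Parameter object expects.

```python
import csv


def select_configuration(config_path, output_path, environment):
    """Write the key/value pairs for one environment to output_path.

    Hypothetical sketch of the steps described for DynaCon.int: read the
    three-column config file, keep only rows whose first column matches
    the current environment, and save them. Returns the number of pairs.
    """
    with open(config_path, newline="") as src:
        pairs = [
            (row[1], row[2])
            for row in csv.reader(src)
            if len(row) == 3 and row[0] == environment  # skip blank/short lines
        ]
    if not pairs:
        # An empty result usually means the environment isn't in the config file.
        raise ValueError(f"No configuration entries for environment {environment!r}")
    with open(output_path, "w", newline="") as dst:
        writer = csv.writer(dst)
        writer.writerow(["key", "value"])  # assumed header for the parameter step
        writer.writerows(pairs)
    return len(pairs)
```

Raising an error on an empty selection keeps a misconfigured server from continuing with no parameters at all.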

Compiling and installing extensions

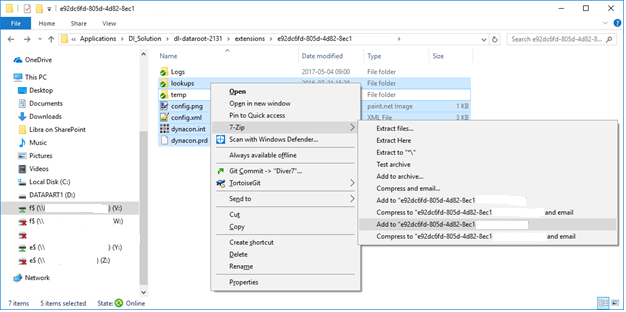

Extensions have to be compiled and installed before you can use them, but this is simple and straightforward. To compile, just go to the directory where the extension code base is located, compress the files, and rename the compressed file.

In this example, you can see that under the extensions directory there is a directory corresponding to the unique global identifier of the extension. (See Figure 11.) That directory contains the following files:

- DynaCon.prd, the production script

- DynaCon.int, the Integrator script

- a .png icon file

- config.xml, the configuration file

- any other files to include in the compile

To compile these files, all you have to do is compress them with a utility such as 7-Zip.

Figure 11: Compile the extension by compressing the files

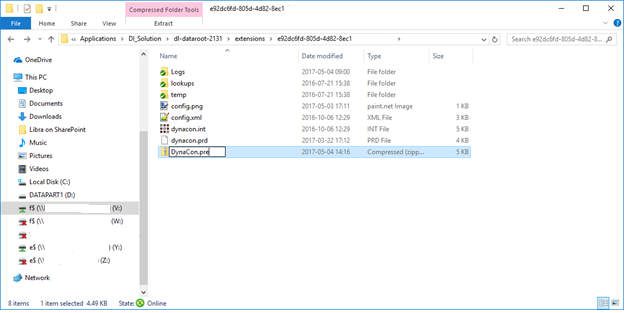

After you compress the files, change the file extension to the .pre file extension for production extensions. (See Figure 12.) That’s it. The extension is now ready to install.

Figure 12: Rename the compressed file with the production extension file extension
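If you build extensions often, you can script the compress-and-rename step. This Python sketch assumes that a .pre file is an ordinary zip archive with the extension files at its root, which matches compressing the files inside the extension directory with 7-Zip in zip format; verify that against a .pre file your own Workbench accepts before relying on it. Writing straight to a .pre name is equivalent to compressing and then renaming. The function name `build_extension` is hypothetical.

```python
import os
import zipfile


def build_extension(source_dir, output_path):
    """Zip every file under source_dir into output_path (a .pre file).

    Files are stored relative to source_dir, so they sit at the archive
    root. Returns the archived names in the order they were written.
    """
    if not output_path.lower().endswith(".pre"):
        raise ValueError("production extensions use the .pre file extension")
    output_abs = os.path.abspath(output_path)
    names = []
    with zipfile.ZipFile(output_path, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for root, dirs, files in os.walk(source_dir):
            dirs.sort()  # deterministic traversal order
            for name in sorted(files):
                full_path = os.path.join(root, name)
                if os.path.abspath(full_path) == output_abs:
                    continue  # never add the archive to itself
                arcname = os.path.relpath(full_path, source_dir)
                archive.write(full_path, arcname)
                names.append(arcname)
    return names
```

For example, `build_extension("extensions/<extension-GUID>", "DynaCon.pre")`, where the directory path is a placeholder for your own extension’s GUID directory.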

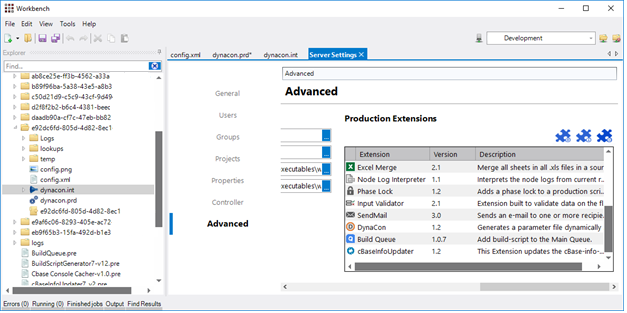

In Version 7, you install extensions from Workbench. Log in to the Diver server, and go to Tools > Server Settings. Click the Advanced tab. In the Production Extensions section, there’s a jigsaw puzzle icon with a little plus sign. (See Figure 13.) When you click that icon, you get a dialog box to browse to your .pre files. Select the one you want to install. Click Open, and the .pre file installs and creates the extension.

Figure 13: Workbench production extensions interface

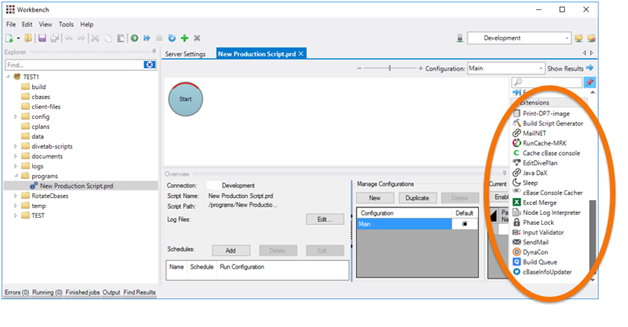

Once installed, your extensions are immediately ready for use in DI-Production. (See Figure 14.)

Figure 14: Installed extensions

One note: when you install an extension as an upgrade but didn’t change the version number, you might get this warning message:

“A newer or the same version of the extension with GUID xxx is already installed. Do you wish to force installation anyways?”

This prevents you from inadvertently overwriting your extension. Click “Yes” to continue, or click “No” to revise the extension version number. Use the extension editor in Workbench under Tools > Extension Editor to make any changes.

For more information

For more information about extensions, see the online Workbench help topics. In particular, for step-by-step instructions to update extensions, such as how to change the version number, see the section about updating production extensions.

Example challenges: Extension use cases

DynaCon is just one example of an extension created to solve a challenge and make the code available for reuse. Like DynaCon, extensions are useful whenever and wherever you have generic code that you want to make easy to reuse.

Here are some more examples of what our customer has built and then a bit of detail about each:

- Extracting data

- Sending mail

- Retrieving data from web services

- Pausing a script

Example: Extracting data

To streamline operations, our customer needed a standardized way to extract data from a large transactional database system for multiple downstream systems. The extracted data must exactly match the transactional systems. So, the customer built an extension called MaX, which guarantees that 100% of the data makes it across with zero duplicates and zero gaps. This process has been running for two years with probably over a billion records extracted, and the extension has never once failed.
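How MaX provides its guarantee isn’t covered here. As a hypothetical illustration of what “zero duplicates and zero gaps” means, here is a Python reconciliation check that compares the keys in the source system with the keys that were extracted. It is not MaX’s algorithm, and `reconcile` is an illustrative name.

```python
from collections import Counter


def reconcile(source_keys, extracted_keys):
    """Compare extracted record keys against the source system's keys.

    Returns three sorted lists: keys missing from the extract (gaps),
    keys extracted more than once (duplicates), and keys that are not in
    the source at all. Three empty lists mean an exact copy.
    """
    source = set(source_keys)
    counts = Counter(extracted_keys)
    extracted = set(counts)
    gaps = sorted(source - extracted)
    duplicates = sorted(key for key, n in counts.items() if n > 1)
    unexpected = sorted(extracted - source)
    return gaps, duplicates, unexpected


print(reconcile([1, 2, 3, 4], [1, 2, 2, 4, 5]))  # ([3], [2], [5])
```

Checking by key rather than by row count matters: an extract with one duplicate and one gap has the right number of rows but is still wrong.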

Example: Sending mail

If you want to be notified when a script has errors, a DI-Production extension is a great way to send alert emails when things go wrong. Or, you might want to send emails partway through a flow, for example, to select a file and send it to a group of recipients. A send mail extension can perform those actions conditionally at any point in the process. The extension can also handle attachments, if desired, and generate dynamic recipient lists.
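Here is a minimal Python sketch of the message-building part of such an extension, using the standard library’s `email.message.EmailMessage`. The function `build_alert` is hypothetical; it takes a recipient list computed at run time, drops blanks and duplicates, and optionally attaches a file. Sending is left to `smtplib`, for example `smtplib.SMTP(host).send_message(msg)`, with your own mail server as `host`.

```python
from email.message import EmailMessage
from pathlib import Path


def build_alert(subject, body, recipients, sender, attachment=None):
    """Build an alert email for a recipient list computed at run time.

    Blank and duplicate addresses are dropped while keeping the original
    order. If attachment is given, the file is attached as
    application/octet-stream under its own file name.
    """
    unique = list(dict.fromkeys(r.strip() for r in recipients if r.strip()))
    if not unique:
        raise ValueError("no recipients")
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = ", ".join(unique)
    msg["Subject"] = subject
    msg.set_content(body)
    if attachment is not None:
        path = Path(attachment)
        msg.add_attachment(
            path.read_bytes(),
            maintype="application",
            subtype="octet-stream",
            filename=path.name,
        )
    return msg
```

Keeping message construction separate from sending makes the recipient logic easy to test without a mail server.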

Example: Retrieving data from web services

For another challenge, our customer uses an extension to harvest XML-based data from web services. The company calls this the Java data extractor because it targets any system that exposes XML-based data through a web service and extracts the data from that service. It’s easy to connect to a new or changed web service by upgrading the existing extension. This saves a lot of coding and works for any back-end system that exposes data through an XML-based web service.
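The customer’s extractor is written in Java, and its internals aren’t described here. As a hypothetical Python sketch of the same idea, the code below fetches XML from a URL and flattens each repeated record element into a dictionary. Making the record tag a parameter is what lets one piece of code serve a new or changed service. Namespaced XML would need the `{namespace}tag` form for `record_tag`; `fetch_xml` and `xml_to_rows` are illustrative names.

```python
import urllib.request
import xml.etree.ElementTree as ET


def fetch_xml(url, timeout=30):
    """Download the raw XML document from a web service endpoint."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def xml_to_rows(xml_bytes, record_tag):
    """Flatten every <record_tag> element into {child tag: child text}.

    The record tag is configuration, not code, so the same function can
    be pointed at a different service by changing a parameter.
    """
    root = ET.fromstring(xml_bytes)
    return [
        {child.tag: (child.text or "").strip() for child in record}
        for record in root.iter(record_tag)
    ]
```

Usage would be `xml_to_rows(fetch_xml(service_url), "order")`, where `service_url` and the `order` tag stand in for your own service.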

Example: Pausing a script

Finally, there’s the challenge of needing production scripts to pause, or sleep, for a certain amount of time or until a specific time of day, and then continue. The customer built an extension for scripts that need this timeout ability. The extension is configurable to halt the production script either for X number of seconds or until a certain time, specified as HH:MM:SS. You can use this extension to manage resource bottlenecks or, for other reasons, to pause a script’s processing until you want it to resume.
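The two pause modes can be sketched in Python. `seconds_to_wait` and `pause` are hypothetical names, and one behavior is an assumption not stated above: if the HH:MM:SS time has already passed today, the sketch waits until that time tomorrow. Times are naive local times, so daylight saving transitions are not handled.

```python
import datetime as dt
import time


def seconds_to_wait(spec, now=None):
    """Convert a pause spec into a number of seconds.

    spec is either a duration in seconds, such as "90", or a time of day
    in HH:MM:SS form. Assumption: a time that has already passed today
    means that time tomorrow.
    """
    if ":" not in spec:
        seconds = float(spec)
        if seconds < 0:
            raise ValueError("pause duration must not be negative")
        return seconds
    if now is None:
        now = dt.datetime.now()
    target_time = dt.datetime.strptime(spec, "%H:%M:%S").time()
    target = dt.datetime.combine(now.date(), target_time)
    if target <= now:
        target += dt.timedelta(days=1)
    return (target - now).total_seconds()


def pause(spec):
    """Block the calling process for the duration described by spec."""
    time.sleep(seconds_to_wait(spec))
```

For example, `seconds_to_wait("23:30:00", now=dt.datetime(2017, 10, 11, 22, 0))` returns `5400.0`, an hour and a half. Passing `now` explicitly keeps the calculation testable without actually sleeping.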

Summary

The possibilities for production extensions are endless. Extensions can prevent duplication of code, rapidly accelerate your development efforts, and make complex operations a simple object that you drag onto a canvas. With a build-once, use over-and-over model, extensions are really powerful, and there are lots of uses for them.

Whenever you build something that might be common functionality, ask yourself: Do you do this often? Do you want to crack this problem generically? Is it worth turning the solution into a production extension? If the answers are yes, don’t be afraid. Creating and using extensions can become part of your development team’s skill set to standardize code and streamline development.

- How to Use DI-Production Extensions - October 11, 2017