Diver® Platform

Our core enterprise platform includes everything you need for analytics success

Integration. KPIs. Analytics.

Quickly deploy to achieve fast ROI

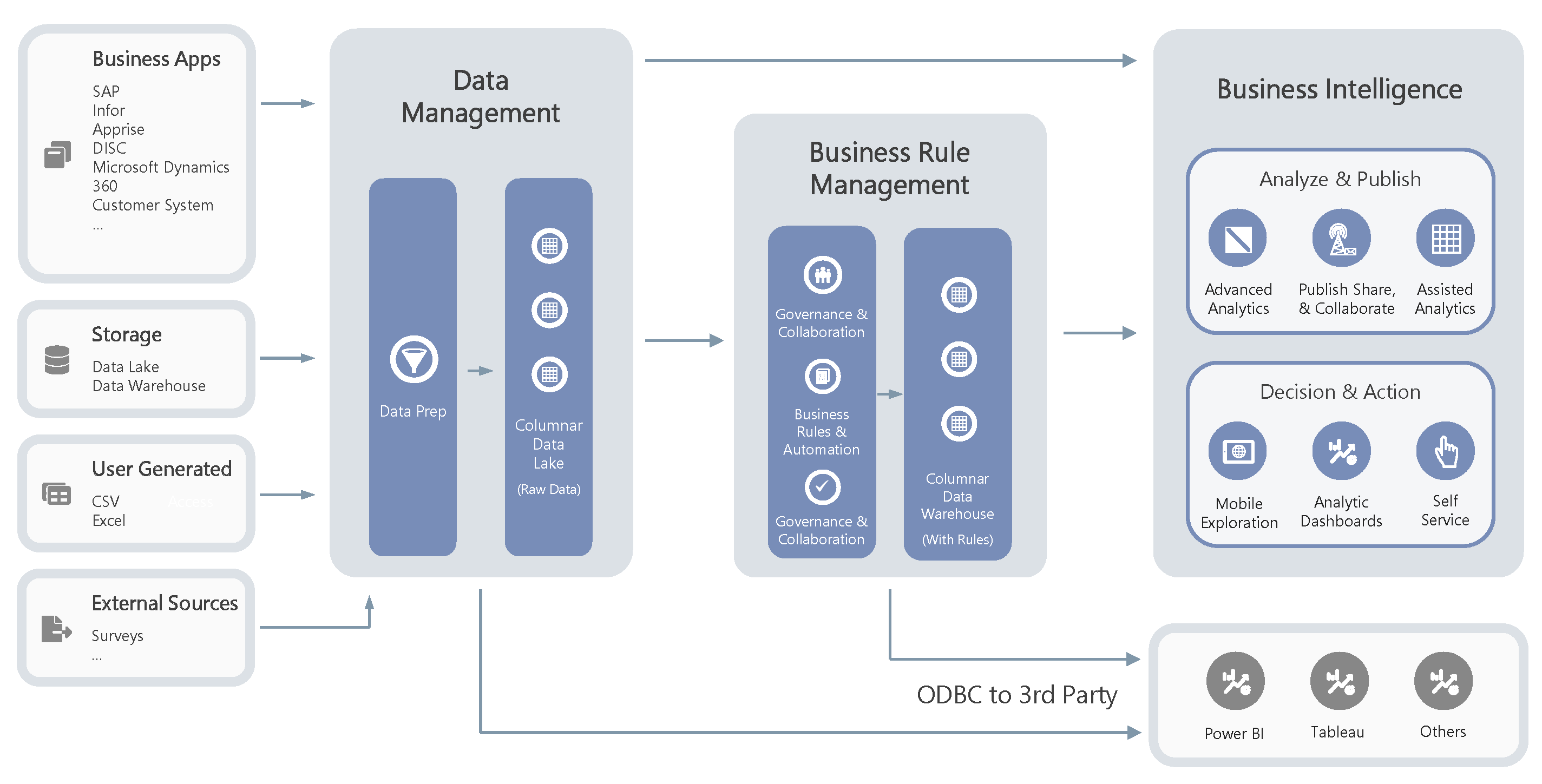

Diver Platform is an end-to-end enterprise platform that covers data integration, data management, business rules management, and analytics and visualization. You get in one platform what would otherwise require multiple products from multiple vendors.

The Diver Platform (Diver) suite combines external and internal data sources to provide historical, current, and predictive role-based views of company data via dashboards and reports. Diver uses an end-to-end process of collecting data streams and submitting them for analysis, ensuring high data accuracy at every step: integration, KPIs, and analytics.

What can you do with Diver Platform?

Integrate all of your company data into one central source

A brief tour of Diver Platform

For more information

Diver Platform

Make better business decisions that result in increased revenue, lowered costs, and improved outcomes

What's New in 7.1?

Summary of New Features & Enhancements in Diver Platform 7.1
